I work as a consultant, mostly for large software development organizations at public companies that maintain large systems handling millions of transactions each day. My typical engagement lasts at least 1 year and no longer than 2 years. It’s long enough that I’m able to dig deep, but short enough that I get exposed to many different organizations. This gives me a unique and informed perspective on how software development works at a fair number of .NET shops. I’m kind of like Marlin Perkins, able to observe the natural behavior of cutting-edge software development organizations in the wild.

One interesting ritual that I’ve seen practiced over and over is the rite of THE CORE FRAMEWORK. Some organizations actually go as far as to call it THE FRAMEWORK. Others are content to simply call it core. Regardless of what you call it, it’s usually a terrible idea that will cripple the productivity of your organization.

A point of clarification

I want to be clear. This post is about company-wide core frameworks. That’s a common framework that is used as the foundation for multiple applications across the company. That is not the same as an application core library.

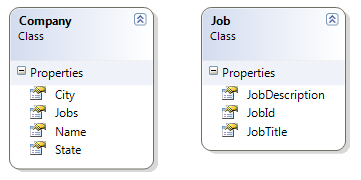

An application core library is usually just the business layer for a single application that can be shared across different assemblies at the application layer. For example, the SquareHire core library is used for the SquareHire public website, the SquareHire ATS (webapp), the SquareHire admin app (another web app), the SquareHire processor, and the SquareHire webapi. All of these are different assemblies that share a common data and service backend. Together they make up the SquareHire application so it makes sense that they would share a common SquareHire core library.

A company-wide Core Framework is something different. That’s when a company has multiple applications that do not share a common data and service backend. They may have nothing to do with each other. However, the company wants to standardize software architecture across the company, so the decision is made to build every application on top of a common, company-wide Core Framework. Often these Core Frameworks are named after the company, like AcmeInc.core. These company-wide core frameworks force a common architecture and code base on apps that do NOT share a common data and service backend. These are the frameworks this post is about.

How does it happen?

A group of programmers builds an application. The application works, the company grows, and it hires more developers. As time goes on, more features are added to the application by developers who really don’t understand the original designs. They just kind of bolt on code that works in the way they’re used to doing things. The new code doesn’t follow the patterns established by the original designers, but it does accomplish the short-term goal. Besides, the new approach is probably better than the original design anyway.

More time goes on. The company becomes even more successful, which means the app is supporting more users. It’s now maintained by a completely new group of programmers; nobody’s really sure who the original developers were. Each new wave of developers implements features using what they feel are the best practices. The new features hang off the original application like tumors. Growths that are powered by the original organism, but refuse to cooperate with it.

Things go on this way. The company is now so successful that it is developing multiple applications, or maybe it is acquired and merged with another organization and its software projects. However it happens, the point is that the organization now has multiple teams maintaining multiple applications. Each application has a different architecture and is becoming increasingly difficult to maintain as new generations of programmers keep bolting stuff on that doesn’t work in the way the original app was designed.

This is the moment when somebody has the bright idea “You know what would make our software development efforts much more efficient and would make all of our applications work much better… if we standardized on a single perfect architecture and made everybody in the entire organization adopt it. In fact, we should create a set of Core libraries, and then every new project will be built on top of them.”

The theory of company-wide Core

On the surface, a Core Framework makes a lot of sense. There are some good arguments for standardizing and implementing a common framework across your organization. Here are a few of them.

1. New development should be faster/easier

New projects will be much easier to get off the ground. Teams won’t need to write plumbing code like persistence; they’ll get everything they need from the “Core”.

2. Managing development resources (people) should be easier

If you standardize it should be easier for developers to move between teams. They’ll already be familiar with the architecture.

3. The smartest guys can guide us

Since the “Core” will be designed by the architect (or architects) it will embody the best possible architecture for the organization and it will enforce a set of best practices that will be implemented by less capable programmers who might otherwise go astray.

I may have overdone the sarcasm on that last one but the point is that creating a core really does seem like a good idea on paper.

The reality of company-wide Core

The theory sounds good, but the reality doesn’t work out that way. Here are some real-world observations pulled from my time at real organizations that were in various stages of implementing Core libraries. Keep in mind that the architects and developers at these organizations are some of the smartest that I’ve ever worked with. The problem definitely was not a lack of skill or programming experience.

1. New development should be faster/easier

The Core may actually get the initial phase of a new project off the ground a bit quicker, but it doesn’t last. Developers quickly find that the Core brings with it limitations as well as benefits. It’s not long until the developers of the new application are scheduling time with the architect and the Core team to try to figure out how Core can be changed to accommodate the needs of this new application. Did you catch that? Not only does Core not meet the needs of the new app, the new application actually winds up driving changes to Core. This happens for every application that consumes it. What’s really been created is a massive cross-application dependency. Nothing brightens my day like pulling the latest build of Core and discovering that my entire application is now broken because some other team decided that we needed a better way to manage transactions or the DI container or any of the other things that find their way into these core libraries.
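That cross-application dependency can be sketched in a few lines. This is only a toy illustration, not code from any real Core library: `core_v1_save`, `core_v2_save`, `billing_app`, and the `transaction` parameter are all hypothetical names, and Python is used purely for brevity.

```python
# Hypothetical sketch: two releases of a shared "Core" persistence helper.
# None of these names come from a real framework.

def core_v1_save(record):
    """Core 1.0: the call every application was originally written against."""
    return {"saved": record}


def core_v2_save(record, transaction):
    """Core 2.0: another team needed explicit transactions, so the
    signature changed for every application that consumes Core."""
    return {"saved": record, "transaction": transaction}


def billing_app(save):
    """An application that still calls Core the 1.0 way."""
    return save({"invoice": 42})


def try_build(app, save):
    """Run an app against a given Core release and report the outcome."""
    try:
        app(save)
        return "ok"
    except TypeError:
        # The app's call no longer matches Core's signature.
        return "broken"


results = {
    "core 1.0": try_build(billing_app, core_v1_save),
    "core 2.0": try_build(billing_app, core_v2_save),
}
print(results)
```

The billing app did not change between the two runs. Only Core did, and that was enough to break it.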

2. Managing development resources (people) should be easier

This benefit actually does happen to some extent. Different apps in the organization will be structured in a similar way so that it’s easier for developers to move between them. Unfortunately this commonality comes with negative side effects.

The worst effect is that software development becomes uniformly slow and difficult. The Core is a cross-application dependency that forces the decisions made by one team onto every other team in the organization. This also means that when your team needs a change made to Core, it’s not going to happen quickly. You’re going to have to wait for buy-in from the architects and other team leads.

I’ve also seen Core negatively affect organizations’ ability to hire. Core usually goes hand in hand with a dramatic increase in complexity. I’ve heard managers say that it’s incredibly difficult to find programmers, and once they do, it takes 5 or 6 months for them to be productive because the app architecture is just so complex. Does the Core have to be that complex? No, but for some reason it always is… which brings us to number 3.

3. The smartest guys can guide us

The Core is indeed designed by the smartest programmers in the organization. This has to be a good thing, right? Wrong. There are two big problems here.

First is the problem of misaligned incentives. When a group of programmers is working together to ship a product, their incentives are all pretty much aligned. They want to ship the product. If the product doesn’t ship, it’s a big problem for everyone. When you take your smartest programmers, pull them out of that team, and tell them their priority is to build the core architecture that all other applications will be built on, you’ve just given them a different set of incentives. You just told them that it’s not their job to ship product. Their job may be to create beautiful architecture, to develop new techniques for modeling business domains, to research new technologies, or to instruct developers who are shipping products on how they should be implementing the Core. The point is, incentives are no longer aligned.

The second problem is a phenomenon that I’ve labeled Smart Guy Disease. This is another thing I’ve seen repeated in multiple organizations. The basic idea is that the worst mistakes, the ones with the most negative impact on an organization, are consistently made by the “smart” programmers, not the “dumb” programmers. If you want more detail, I wrote a whole post on it here: http://rlacovara.blogspot.com/2014/03/smart-guy-disease.html

Conclusion

The company-wide core framework is an idea that seems like an obvious win to management and architects. But be warned. Here there be monsters. I have never, not once, seen a company-wide architecture solve more problems than it created. I have seen two examples where company-wide frameworks had hugely negative impacts on their respective organizations. So think twice before starting down that path.

One alternative I would suggest is to maintain a base application codebase. But instead of forcing every application to consume it and inherit from it (thus creating cross-application dependencies), just fork it when you create a new app. That way you have a solid starting point, but you’re free to make changes without worrying about how those changes will affect other apps in your organization.
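The difference between consuming a shared base and forking it can be shown with a toy model. This is a sketch under stated assumptions, not a recipe: the dictionaries and their keys are hypothetical stand-ins for a base codebase, and `copy.deepcopy` stands in for forking a repository.

```python
import copy

# Hypothetical base application "codebase", modeled as nested settings.
base_app = {"persistence": {"orm": "default", "transactions": "implicit"}}

# Shared Core: both apps hold a reference to the same object.
shared_a = base_app
shared_b = base_app
shared_b["persistence"]["transactions"] = "explicit"  # team B's change
shared_a_sees = shared_a["persistence"]["transactions"]

# Forked base: each app starts from its own independent copy.
template = {"persistence": {"orm": "default", "transactions": "implicit"}}
fork_a = copy.deepcopy(template)
fork_b = copy.deepcopy(template)
fork_b["persistence"]["transactions"] = "explicit"  # team B's change
fork_a_sees = fork_a["persistence"]["transactions"]

print("shared:", shared_a_sees)  # team A inherits team B's change
print("forked:", fork_a_sees)    # team A keeps the original behavior
```

The cost, which this sketch does not model, is that improvements to the base no longer reach existing forks automatically.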